Page 43 - Data Science Algorithms in a Week


Edwin Cortes, Luis Rabelo and Gene Lee

Cascading antimessage explosions can occur when events are close to the current GVT. Because events processed far ahead of the rest of the simulation are likely to be rolled back, it may be better for those runaway events not to release their messages immediately. On the other hand, using TW as the initial phase before switching to BTB reduces the frequency of synchronizations and increases the size of the bucket.

The process of BTW is explained as follows:

1. The first simulation events processed locally on each node beyond GVT release their messages right away, as in TW. After that, messages are held back and BTB starts execution.

2. When the events of the entire cycle are processed, or when the event horizon is

determined, each node requests a GVT update. If a node ever processes more

events beyond GVT, it temporarily stops processing events until the next GVT

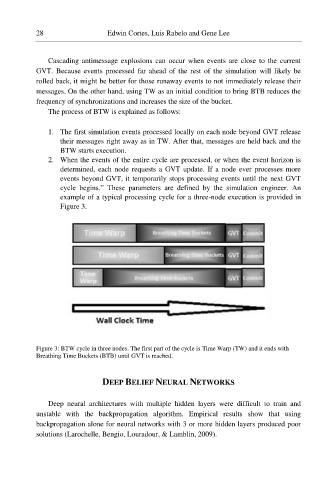

cycle begins.” These parameters are defined by the simulation engineer. An

example of a typical processing cycle for a three-node execution is provided in

Figure 3.

Figure 3: BTW cycle in three nodes. The first part of the cycle is Time Warp (TW) and it ends with

Breathing Time Buckets (BTB) until GVT is reached.
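The two-phase BTW cycle described above can be sketched for a single node as follows. This is a minimal illustration under stated assumptions, not the authors' or any simulator's implementation. The names `btw_cycle`, `release_on_gvt`, `n_risk` (how many events beyond GVT release messages immediately) and `n_opt` (the maximum number of events processed beyond GVT per cycle) are hypothetical. Events are reduced to bare timestamps assumed to lie beyond the current GVT, and rollbacks and antimessages are not modeled.

```python
from typing import List, Tuple

# Hypothetical sketch: n_risk, n_opt and these function names are illustrative
# assumptions; rollbacks and antimessages are not modeled.


def btw_cycle(
    event_times: List[float],
    n_risk: int,
    n_opt: int,
    event_horizon: float = float("inf"),
) -> Tuple[List[float], List[float], List[float]]:
    """Classify one node's pending events (all assumed beyond GVT) for one BTW cycle.

    Returns (released, held, deferred) lists of event timestamps.
    """
    released, held, deferred = [], [], []
    for count, t in enumerate(sorted(event_times)):
        if count >= n_opt or t >= event_horizon:
            # Node has run too far past GVT (or reached the event horizon):
            # stop and wait for the next GVT cycle.
            deferred.append(t)
        elif count < n_risk:
            # Time Warp phase: this event's messages are sent right away.
            released.append(t)
        else:
            # Breathing Time Buckets phase: messages are held back.
            held.append(t)
    return released, held, deferred


def release_on_gvt(held: List[float], new_gvt: float) -> Tuple[List[float], List[float]]:
    """After a GVT update, events earlier than new_gvt can no longer be rolled back,
    so their held messages are safe to release. Returns (release_now, still_held)."""
    release_now = [t for t in held if t < new_gvt]
    still_held = [t for t in held if t >= new_gvt]
    return release_now, still_held
```

For example, with `n_risk=2` and `n_opt=4`, six pending events at times 1 through 6 split into two events that release messages immediately (times 1 and 2), two whose messages are held (times 3 and 4), and two deferred to the next cycle (times 5 and 6). A later GVT update to 3.5 would then release the message held for time 3 while the one for time 4 stays held.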

DEEP BELIEF NEURAL NETWORKS

Deep neural architectures with multiple hidden layers were difficult to train and unstable with the backpropagation algorithm. Empirical results showed that using backpropagation alone to train neural networks with three or more hidden layers produced poor solutions (Larochelle, Bengio, Louradour, & Lamblin, 2009).