Page 42 - FINAL CFA II SLIDES JUNE 2019 DAY 2

READING 8: MULTIPLE REGRESSION AND ISSUES IN REGRESSION ANALYSIS

LOS 8.h: Distinguish between and interpret the $R^2$ and adjusted $R^2$ in multiple regression.

MODULE 8.4: COEFFICIENT OF DETERMINATION & ADJUSTED R-SQUARED

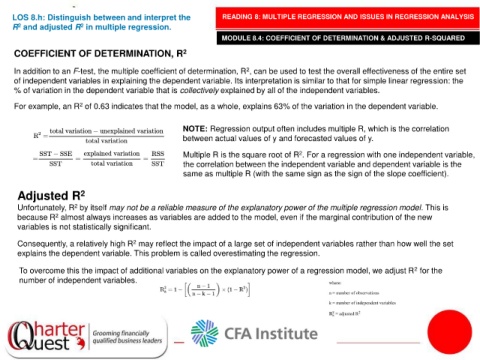

COEFFICIENT OF DETERMINATION, $R^2$

In addition to an F-test, the multiple coefficient of determination, $R^2$, can be used to test the overall effectiveness of the entire set of independent variables in explaining the dependent variable. Its interpretation is similar to that for simple linear regression: the percentage of variation in the dependent variable that is collectively explained by all of the independent variables.

For example, an $R^2$ of 0.63 indicates that the model, as a whole, explains 63% of the variation in the dependent variable.
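A minimal sketch of this interpretation, assuming the usual decomposition for a regression with an intercept, $R^2 = 1 - SSE/SST$ (unexplained variation over total variation). The function name `r_squared` and the sample numbers are illustrative only and do not come from the slides.

```python
def r_squared(actual, fitted):
    """Share of the variation in `actual` explained by `fitted`: 1 - SSE/SST."""
    mean_y = sum(actual) / len(actual)
    # Total variation of the dependent variable around its mean
    sst = sum((y - mean_y) ** 2 for y in actual)
    # Variation left unexplained by the model (squared residuals)
    sse = sum((y - f) ** 2 for y, f in zip(actual, fitted))
    return 1.0 - sse / sst


# Hypothetical actual values and model forecasts
actual = [10.0, 12.0, 15.0, 19.0, 24.0]
fitted = [9.0, 13.0, 16.0, 18.0, 24.0]
print(f"R^2 = {r_squared(actual, fitted):.2f}")
```

A perfect forecast gives an $R^2$ of 1, and a forecast that always equals the sample mean gives an $R^2$ of 0.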

NOTE: Regression output often includes multiple R, which is the correlation between actual values of y and forecasted values of y. Multiple R is the square root of $R^2$. For a regression with one independent variable, the correlation between the independent variable and the dependent variable has the same absolute value as multiple R and takes the sign of the slope coefficient.
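The note can be checked numerically. The sketch below fits a one-variable regression by hand on made-up data with a negative slope, then compares multiple R (the correlation of actual y with forecasted y) with the correlation between x and y. All names and data are hypothetical.

```python
import math


def mean(values):
    return sum(values) / len(values)


def correlation(a, b):
    """Pearson correlation between two equal-length lists."""
    ma, mb = mean(a), mean(b)
    cov = sum((p - ma) * (q - mb) for p, q in zip(a, b))
    var_a = sum((p - ma) ** 2 for p in a)
    var_b = sum((q - mb) ** 2 for q in b)
    return cov / math.sqrt(var_a * var_b)


def fit_simple_ols(x, y):
    """Least-squares intercept and slope for y = b0 + b1 * x."""
    mx, my = mean(x), mean(y)
    slope = (sum((p - mx) * (q - my) for p, q in zip(x, y))
             / sum((p - mx) ** 2 for p in x))
    return my - slope * mx, slope


# Hypothetical data: y falls as x rises, so the slope is negative
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [9.8, 8.1, 6.3, 3.9, 2.2]

intercept, slope = fit_simple_ols(x, y)
fitted = [intercept + slope * xi for xi in x]

multiple_r = correlation(y, fitted)   # never negative
corr_xy = correlation(x, y)           # carries the sign of the slope
sst = sum((yi - mean(y)) ** 2 for yi in y)
sse = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))
r2 = 1.0 - sse / sst

print(multiple_r, corr_xy, r2)
```

With these data the slope is negative, so `corr_xy` is negative while `multiple_r` is positive; the two agree in absolute value, and `multiple_r ** 2` matches `r2`.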

Adjusted $R^2$

Unfortunately, $R^2$ by itself may not be a reliable measure of the explanatory power of the multiple regression model. This is because $R^2$ almost always increases as variables are added to the model, even if the marginal contribution of the new variables is not statistically significant.

Consequently, a relatively high $R^2$ may reflect the impact of a large set of independent variables rather than how well the set explains the dependent variable. This problem is called overestimating the regression.
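To illustrate why $R^2$ cannot fall when a variable is added, the sketch below fits the same dependent variable twice: once on a single regressor and once with an unrelated extra column. It is a hand-rolled least-squares fit via the normal equations, written only for illustration; the helper names (`solve`, `ols_r_squared`) and all data are hypothetical.

```python
def solve(a, b):
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [u - factor * v for u, v in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def ols_r_squared(columns, y):
    """Fit y on an intercept plus the given columns; return 1 - SSE/SST."""
    rows = [[1.0] + [col[i] for col in columns] for i in range(len(y))]
    k = len(rows[0])
    # Normal equations: (X'X) beta = X'y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    beta = solve(xtx, xty)
    fitted = [sum(b * v for b, v in zip(beta, r)) for r in rows]
    mean_y = sum(y) / len(y)
    sst = sum((yi - mean_y) ** 2 for yi in y)
    sse = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))
    return 1.0 - sse / sst


# Hypothetical data: y depends on x1; `junk` is an unrelated column
x1 = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
junk = [5.0, 1.0, 4.0, 2.0, 8.0, 3.0, 7.0, 6.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1, 12.2, 13.8, 16.1]

r2_one = ols_r_squared([x1], y)
r2_two = ols_r_squared([x1, junk], y)
print(r2_one, r2_two)  # the second value is never below the first
```

The larger model can always reproduce the smaller one by setting the extra coefficient to zero, so its unexplained variation cannot be larger and its $R^2$ cannot be lower.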

To account for the impact of additional variables on the explanatory power of a regression model, we adjust $R^2$ for the number of independent variables.
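As a sketch of the adjustment, the standard textbook definition is $R_a^2 = 1 - \left[\frac{n-1}{n-k-1}\right](1 - R^2)$, where $n$ is the number of observations and $k$ is the number of independent variables. The formula is not shown in this section and is assumed here; the function name and the numbers are illustrative only.

```python
def adjusted_r_squared(r2, n, k):
    """Adjusted R^2 for n observations and k independent variables."""
    if n - k - 1 <= 0:
        raise ValueError("need more observations than estimated coefficients")
    return 1.0 - (n - 1) / (n - k - 1) * (1.0 - r2)


# Hypothetical case: 30 observations, R^2 of 0.63 with three variables
adj_3 = adjusted_r_squared(0.63, n=30, k=3)
# Adding a fourth variable nudges R^2 up to 0.632 (assumed figure)
adj_4 = adjusted_r_squared(0.632, n=30, k=4)
print(f"{adj_3:.4f} {adj_4:.4f}")
```

In this example $R^2$ rises from 0.630 to 0.632, yet adjusted $R^2$ falls from about 0.587 to about 0.573, which suggests the fourth variable does not add enough explanatory power to justify its inclusion.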